Today will be mostly about AI models… but you probably expected that if the name “ChatGPT” has crossed your mind. We will also discuss monoliths (in two different contexts) and so-called SRE.

1. Galactica, Cicero, pix2pix, ChatGPT – the hottest week ever for AI models?

Usually, when we report on AI models, it’s in the context of the electrifying impression they make. However, we have to be honest with ourselves: historically, this has been a mixed bag. Perhaps one of the most spectacular failures was Tay from Microsoft – a chatbot that lived on Twitter and learned human behavior from that platform. If the use of the phrases “learning human behavior” and “Twitter” in the same sentence makes you a little nervous, congratulations – you’ve hit the jackpot.

Tay quickly absorbed the most extreme behavior, started praising Hitler (as Kanye West did), and was generally a huge PR failure for the parent company, which quickly had to pull the plug on the project. Since then, everyone has been rather careful to make sure that such experiments don’t blow up in our faces, and we have far fewer similar situations – but sometimes something still slips through. This time it happened to Facebook.

The company created a model called Galactica. It was meant (emphasis intentional) to serve the scientific world, and was trained on 48 million scientific articles, websites, textbooks, lecture notes, and encyclopedias. It was intended to help academics by summarizing papers, solving math problems, writing scientific code, or annotating molecules and proteins. The whole thing lasted 3 days, because shortly after its release it was found that, despite its veneer of “scientificness,” the model “hallucinates” and generates unverified information. In addition, the scientific community considered it insanely dangerous that the model carried the label of a “reliable source.” Therefore, this “demo” (as communicated by Meta’s PR) was quickly withdrawn. The whole matter is brilliantly described by MIT Technology Review.

Meta in general hasn’t been getting the best press lately, but that doesn’t mean the company is some kind of anti-Midas. It is still able to show us many impressive projects. As a fan of board games, I pick my jaw up off the floor as I watch AI outright destroy more and more of the most complex games. It started with chess (if you’re curious about the story of Deep Blue’s duel with Kasparov, I have a 2h documentary on the topic), then there was Go, and slowly more and more modern titles are affected as well. This time it is Diplomacy’s turn – a game that, since the 1960s, has been credited with modeling the complexity of international relations quite well (in a simplified form, of course). The 360 minutes for a single game reported by Board Game Geek is impressive in itself. It turns out that a new model – Cicero – created by Meta can win at Diplomacy against even the most advanced human players.

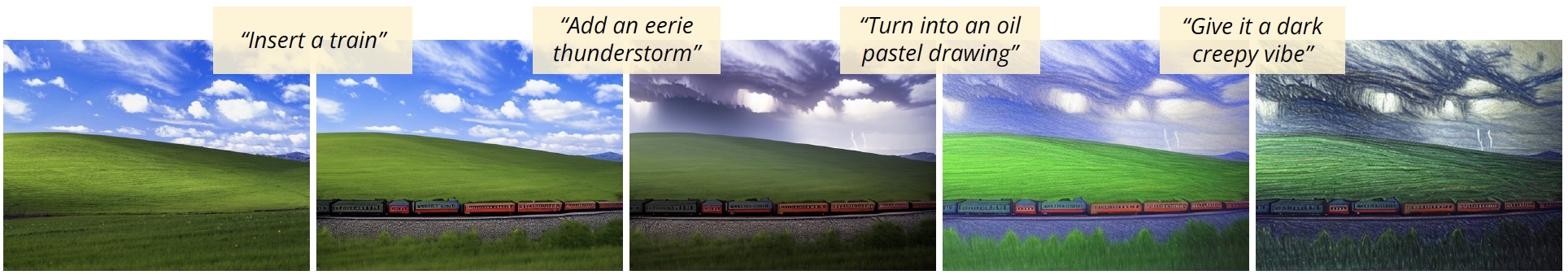

It doesn’t stop there, however, as the University of California is slowly making my dreams come true when it comes to the flexibility of AI image generation. Their latest model, pix2pix, solves the biggest drawback to date – the inability to take a more iterative approach in the creative process. After all, generating content from scratch, or even so-called in-painting (filling in missing parts of an image), works great. However, I’ll admit that when creating such a foundation I’m often not quite sure what I’d like to achieve. The power of pix2pix is that it lets you give commands in the context of a given input image. It sounds like the well-known Style Transfer, but in practice the workflow is closer to working with a human graphic designer. Anyway, see for yourself:

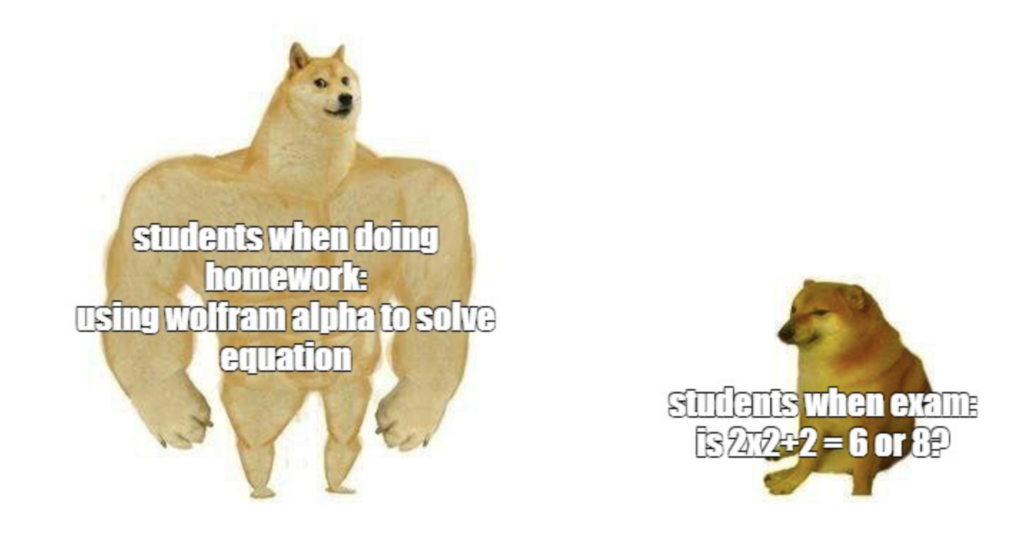

Well, okay, but all of the above are mere curiosities compared to what OpenAI has shown. First, it released a new GPT-3 model, text-davinci-003, which some commenters refer to as GPT-3.5. It can generate longer, more complex texts, at a level similar to, say, academic dissertations. For now, these texts still lack a unique authorial soul, but work on “style transfer” for text is also already underway, so it’s probably a matter of time. There are already claims that the concept of school homework assignments is dead – students have access to a class of tools that can generate most of the work for them.

But it was ChatGPT that blew our minds, and the Internet went crazy, just as it did after the release of Stable Diffusion. That’s because we are talking about the most advanced chatbot ever, one that does real wonders. You can find examples in the following thread:

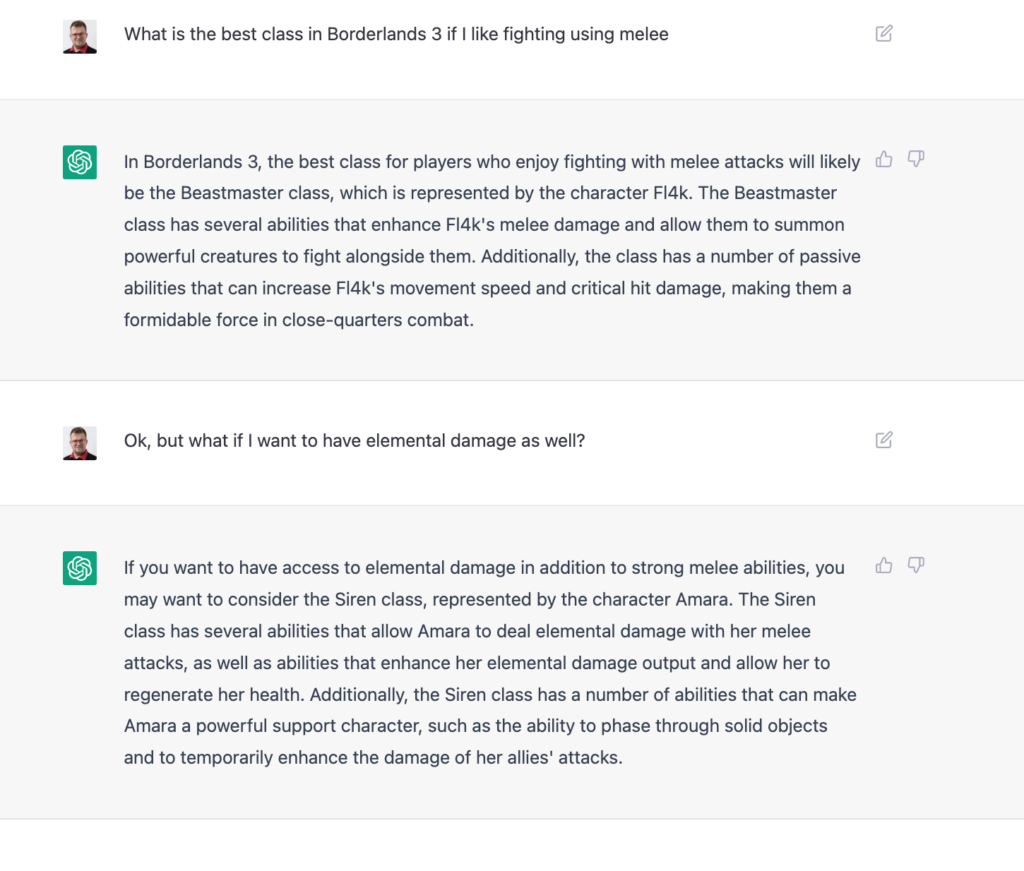

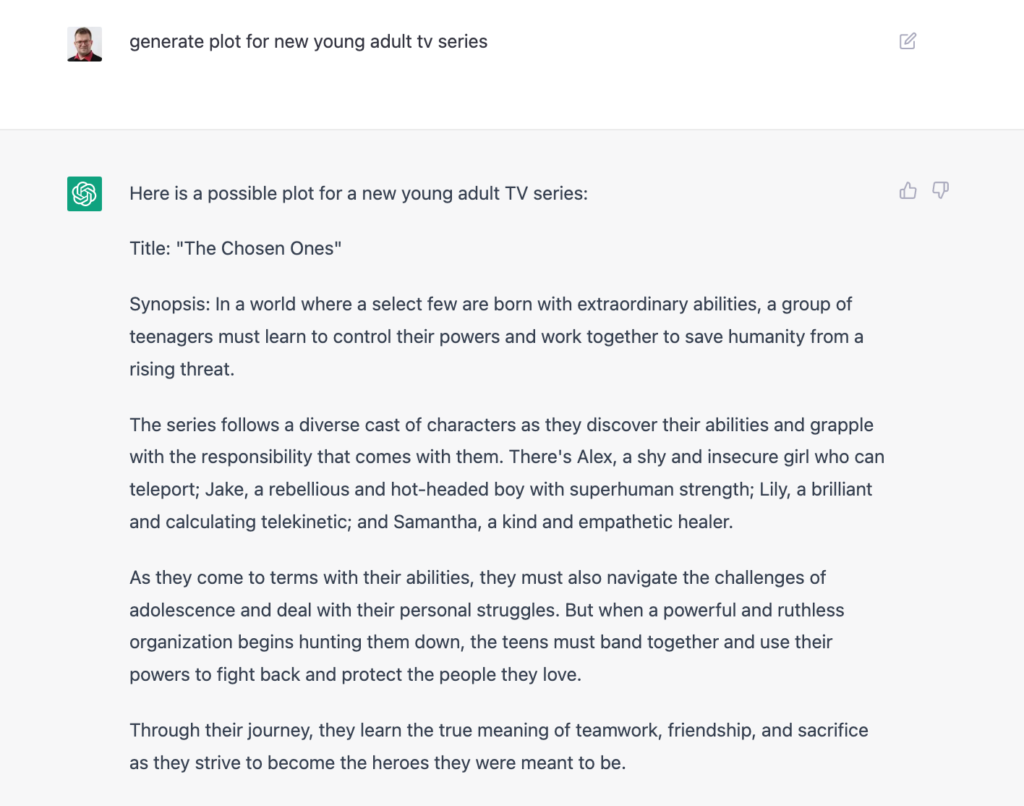

I also played around a bit and well… I’m impressed:

It is good….

Some say that for the first time in its history, Google really has someone to fear.

Anyway, whatever I could say has already been said by the newsletter I mentioned a week ago, The Algorithmic Bridge, which has prepared what I think is the most accessible introduction to ChatGPT. AI has been more “hype” than something useful for years, but in 2022 OpenAI proved that “Blessed are those who have not seen and yet have come to believe.” It’s true that ChatGPT can also fail like the aforementioned Galactica, but when it manages to do something right, it is mind-blowing. Anyone can try it, as the bot is available at chat.openai.com.

Sources

- Why Meta’s latest large language model survived only three days online

- Twitter taught Microsoft’s AI chatbot to be a racist asshole in less than a day

- text-davinci-003

- CICERO: An AI agent that negotiates, persuades, and cooperates with people

- ChatGPT

- pix2pix

- ChatGPT Is the World’s Best Chatbot

2. Glorious Monolith (counter)attacks

Alright, we’ve reached the stars, but I’d like to come back to earth now and touch on at least one more topic. We had Black Friday, and despite the recession, shopping records were broken. Shopify decided to share its results, stirring up some buzz online in the process. Because let’s be honest: 1.27 million requests per second or 3 terabytes of uploaded data per minute does capture the imagination.
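To put those two figures on a common footing, here is a quick back-of-the-envelope conversion. It is only a sketch: it treats both numbers as sustained rates and assumes decimal units (1 TB = 1000 GB), neither of which is stated in Shopify's figures.

```python
# Figures quoted above; treating them as sustained rates is an assumption.
REQUESTS_PER_SECOND = 1_270_000
TERABYTES_PER_MINUTE = 3

# Requests per minute: multiply the per-second rate by 60.
requests_per_minute = REQUESTS_PER_SECOND * 60

# Data per second, assuming decimal units (1 TB = 1000 GB).
gigabytes_per_second = TERABYTES_PER_MINUTE * 1000 / 60

print(f"{requests_per_minute:,} requests per minute")
print(f"{gigabytes_per_second:.0f} GB of data per second")
```

In other words, roughly 76 million requests and 50 GB of data every minute and second respectively, if those rates were held steady.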

The numbers are impressive, especially since Shopify is known for two technology choices. First, it is primarily a Ruby shop, using Rails. Second, its application is a monolith, which is nevertheless able to handle the above traffic. As a result, there is a renewed debate on the Internet as to which architecture is best – examples can be found on Reddit or on Twitter. Although I personally wonder a bit whether you can call something that has Kafka in it a monolith.

The already heated discussion is being further fueled by Elon Musk. Has your boss ever come to you with some brilliant (from his point of view) idea about the architecture of a system he didn’t write? Then you probably understand why Elon’s public rants about microservices being “unnecessary to anyone” provoked laughter and horror at the same time. However, Musk got his way and proclaimed success:

It’s hard to verify the claims because we don’t know what really changed in terms of the system’s architecture (maybe just the lack of advertisers was a blessing for the system’s performance), but thanks to moves like these, Twitter continues to be one of the most discussed topics in the engineering world. And since, according to many commentators, the platform does indeed run faster (although I’d like some empirical evidence of this), monolith fans have been handed some ammunition.

While we’re on the subject of Twitter, the biggest surprise to everyone is that the platform is still operating, despite the layoffs and chaos. So were those who said that Twitter was overgrown as an organization right? Possibly, but it was certainly also simply mature in terms of engineering practices. There is a great deal of talk that Twitter’s success (in the sense that it survived the recent layoffs and continues to function) is really the success of the Site Reliability Engineering approach that was popular within the company. At least two trending publications on the web were devoted to this topic: Why Twitter Didn’t Go Down: From a Real Twitter SRE and Will Twitter Infrastructure Go Down?, so if you are curious about the details, I recommend either of them. Additionally, the so-called SRE Book from Google remains one of my favorite reads on software engineering, and since it’s available to everyone for free, I recommend giving it a try. It’s the best source if you want to find out what SRE really brings to the best practices of our industry.

It will be interesting to see when Elon gets tired of Twitter – after all, his Neuralink has announced that it will start human testing soon.

Sources

- Reddit: Shopify monolith served 1.27 Million requests per second during Black Friday

- Why Twitter Didn’t Go Down: From a Real Twitter SRE

- Will Twitter Infrastructure Go Down?

Bonus: Advent of Code has begun

Advent of Code is an event that publishes short programming puzzles every day from December 1 to 25. This year’s edition is the eighth in a row.

Each puzzle is divided into 2 stages, and the user is rewarded with a star for solving each of them. The puzzles place no restrictions on programming language, testing or concurrency – just like in college, only the final score counts. This doesn’t mean that performance isn’t important – naive solutions often run for anywhere from a few hours to a few days (and nothing hurts more than an error thrown after a few hours of running the program).

We have been playing with the group for 3 days now, and if you want to join – you are welcome:

👧 Facebook Group 📈 Vived’s Leaderboard (join code: 2276325-be92402e)

And to somehow, at the end, connect this to our main topic… I was playing with ChatGPT today, and you know what? It’s impressive.