A week ago, we didn’t have our review (I was speaking at the Boiling Frogs conference and simply ran out of time), so now I’m suffering as a punishment – there’s so much material that I didn’t know which topics to choose. So I decided to make a… calendar.

1. What’s interesting in Large Models – The Calendar

All it takes is two weeks away, and 🤯.

So, in calendar form, we’ll go through the most important events since roughly the beginning of March... and end up with a surprisingly long section.

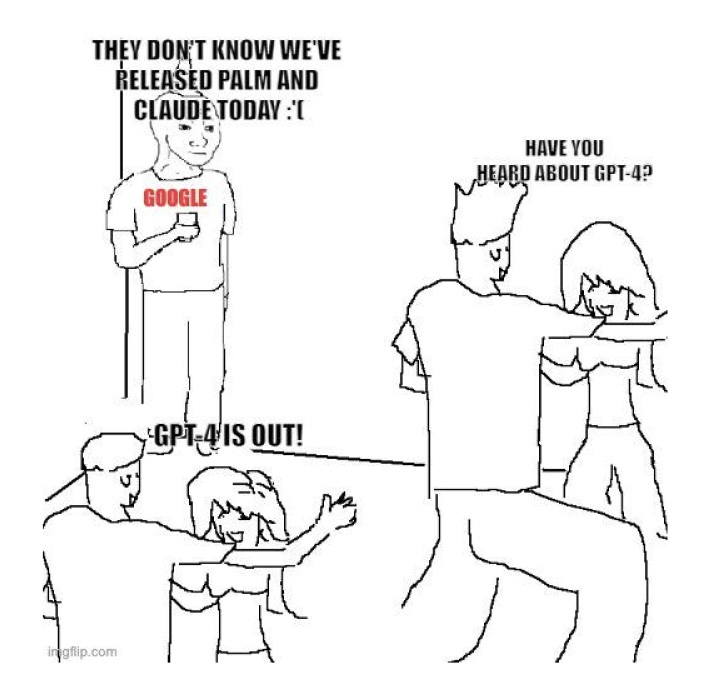

10.03.2023 – Google PaLM-E

Google has launched an API for one of its most advanced language models, PaLM, which is similar to GPT or Meta’s LLaMA models. PaLM can perform many text generation and editing tasks, from writing code to generating summaries or even training conversational chatbots.

In addition to the PaLM API, Google has released an app called MakerSuite that makes it easier for developers to adapt PaLM to specific tasks, allowing them to iterate on prompts and augment datasets with synthetic data. Google also expanded support for generative AI in its Vertex AI platform and launched a new platform called Generative AI App Builder, which lets developers quickly create AI-enriched applications such as bots, digital assistants, and custom search engines, using Google’s core model APIs and ready-made templates. PaLM-E-related features will also make their way to Google Workspace.

14.03.2023 – GPT-4

However, everyone forgot about PaLM-E less than a week later, as OpenAI showed off GPT-4. The model is supposed to be more creative and collaborative than its predecessors, and to solve difficult problems with greater accuracy. It can also process both text and image inputs, although for now it responds only with text. OpenAI has partnered with companies such as Duolingo, Stripe, and Khan Academy to integrate GPT-4 into their products. Most importantly, however, anyone can test the model as part of ChatGPT Plus, a $20 monthly subscription.

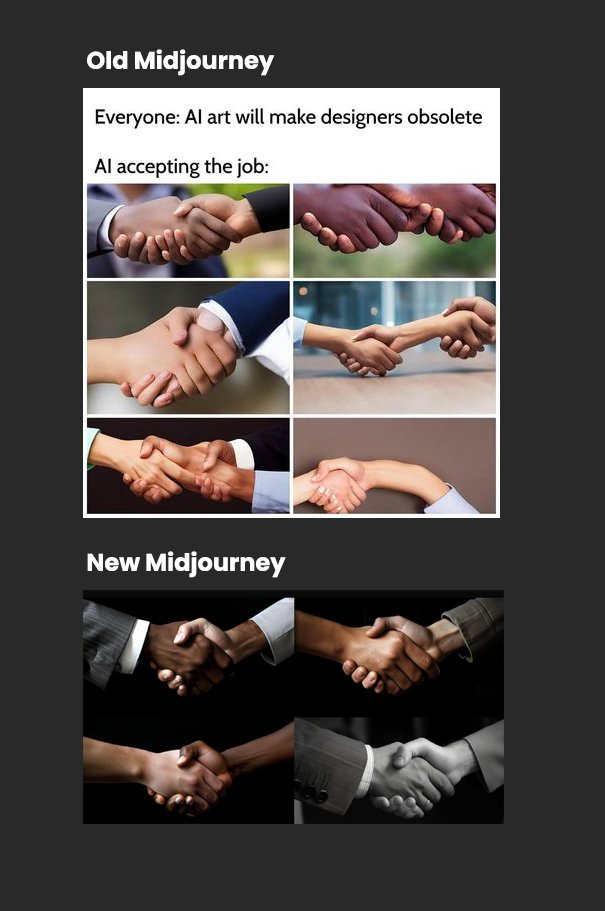

15.03.2023 – Midjourney V5

Only a day after the release of GPT-4, V5 of my beloved Midjourney landed in our hands. It offers higher image quality, a more diverse range of styles, and “seamless” textures. It can also generate more realistic images of hands and eyes, and is reportedly more precise and responsive to user prompts. Some have described the results as photorealistic and “extremely fascinating,” but others are concerned about the “uncanny valley” effect and the possibility of generating fake images that could pass as authentic.

Midjourney’s founder warns that the new version can generate more realistic images than any previous one, and the company has increased the number of moderators to enforce community standards more strictly.

16.03.2023 – Microsoft 365 Copilot

Just a day later, Microsoft announced another tool, Microsoft 365 Copilot. The software combines OpenAI models with Microsoft Graph data and Microsoft 365 applications, creating a productivity tool that responds to natural language queries. Among its main benefits, Copilot saves time by serving as a starting point for writing or creating presentations, and it increases productivity by summarizing emails and generating suggested responses. Copilot will be available in Word, Excel, PowerPoint, Outlook, Teams, Power Platform, Viva, and other programs, and will be rolled out in the coming months.

21.03.2023 – Google Bard becomes available to the public

Google’s Bard has finally been made available to the public as a Preview in the United States and the United Kingdom. Users can ask it general questions and receive answers, although Google stresses that it is not a replacement for its search engine.

Unlike the chatbot available in Bing, Bard does not scan websites and uses only its model to generate responses. The Verge prepared a very interesting comparison of the three tools in its piece “AI chatbots compared: Bard vs. Bing vs. ChatGPT.”

22.03.2023 – GitHub Copilot X

GitHub has announced GitHub Copilot X. The changes include a chat interface, GitHub Copilot Chat, which is integrated with the editor and can deliver in-depth analysis and explanations of code blocks, generate unit tests, and even suggest bug fixes.

GitHub Copilot X also includes Copilot for Pull Requests, which uses the new OpenAI GPT-4 model to generate descriptions for pull requests, as well as Copilot for documentation.

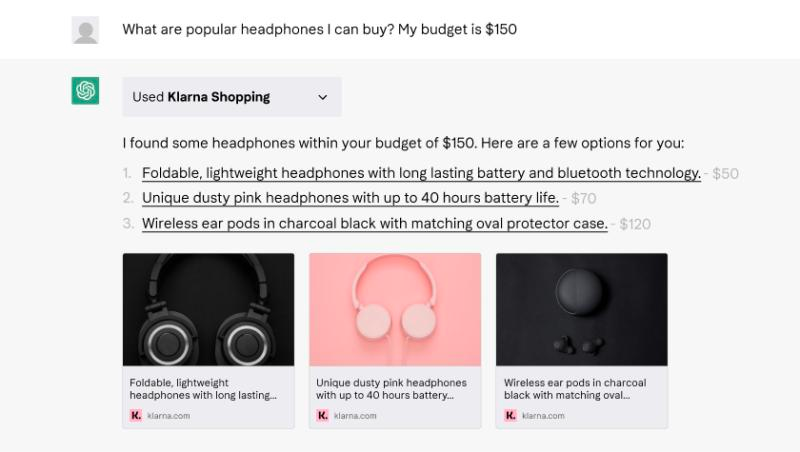

23.03.2023 – ChatGPT Plugins

OpenAI has announced plugins for ChatGPT, which let ChatGPT connect with external applications. The first set was created by companies including Expedia, FiscalNote, Instacart, KAYAK, Klarna, Milo, OpenTable, Shopify, Slack, Speak, Wolfram, and Zapier. These plugins will gradually be made available to a small group of developers and ChatGPT Plus users.

We got the calendar out of the way… But it doesn’t end there.

2. Everyone writes about AI

The biggest proof that AI is entering the mainstream is that everyone has opinions on it. Recently, seminal texts on the topic have been published by a couple of important figures, so I feel obliged to mention them.

Let’s start with a hefty dose of optimism from none other than Bill Gates. In his essay The Age of AI has begun, he writes that in his lifetime there have been just two technological changes he considers revolutionary. The first was the introduction of the graphical user interface, which made modern operating systems like Windows possible. The second is what we’re seeing now: the development of artificial intelligence, exemplified by the GPT model created by OpenAI, which achieved a top score on the Advanced Placement biology exam. Gates believes that the development of artificial intelligence is as fundamental as the creation of the microprocessor, the personal computer, the Internet, and the cell phone, and that it will change the way people work, learn, travel, receive health care, and communicate with each other.

Yuval Noah Harari, author of “Sapiens,” meanwhile worries in his text You Can Have the Blue Pill or the Red Pill, and We’re Out of Blue Pills about the potential risks of artificial intelligence, especially large language models such as GPT-4. He argues that AI systems should be safety-tested and introduced into society at a pace that allows culture to safely absorb them. Harari fears that AI could quickly control and manipulate human culture, leading to a world where non-human intelligence shapes stories, melodies, laws, and tools. He warns that AI could lock humanity into a Matrix-like world of illusion without shooting anyone or implanting chips in brains. Harari therefore urges world leaders to improve institutions and learn to master AI before it masters us, so that we can realize its promised benefits while avoiding the potential dangers.

And finally, the icing on the cake: as I was writing this, a new episode of Lex Fridman’s podcast appeared, in which this extremely popular host interviews Sam Altman, CEO of OpenAI. I haven’t had a chance to listen to the whole thing yet (the interview is two and a half hours long), but I’m already looking forward to it.

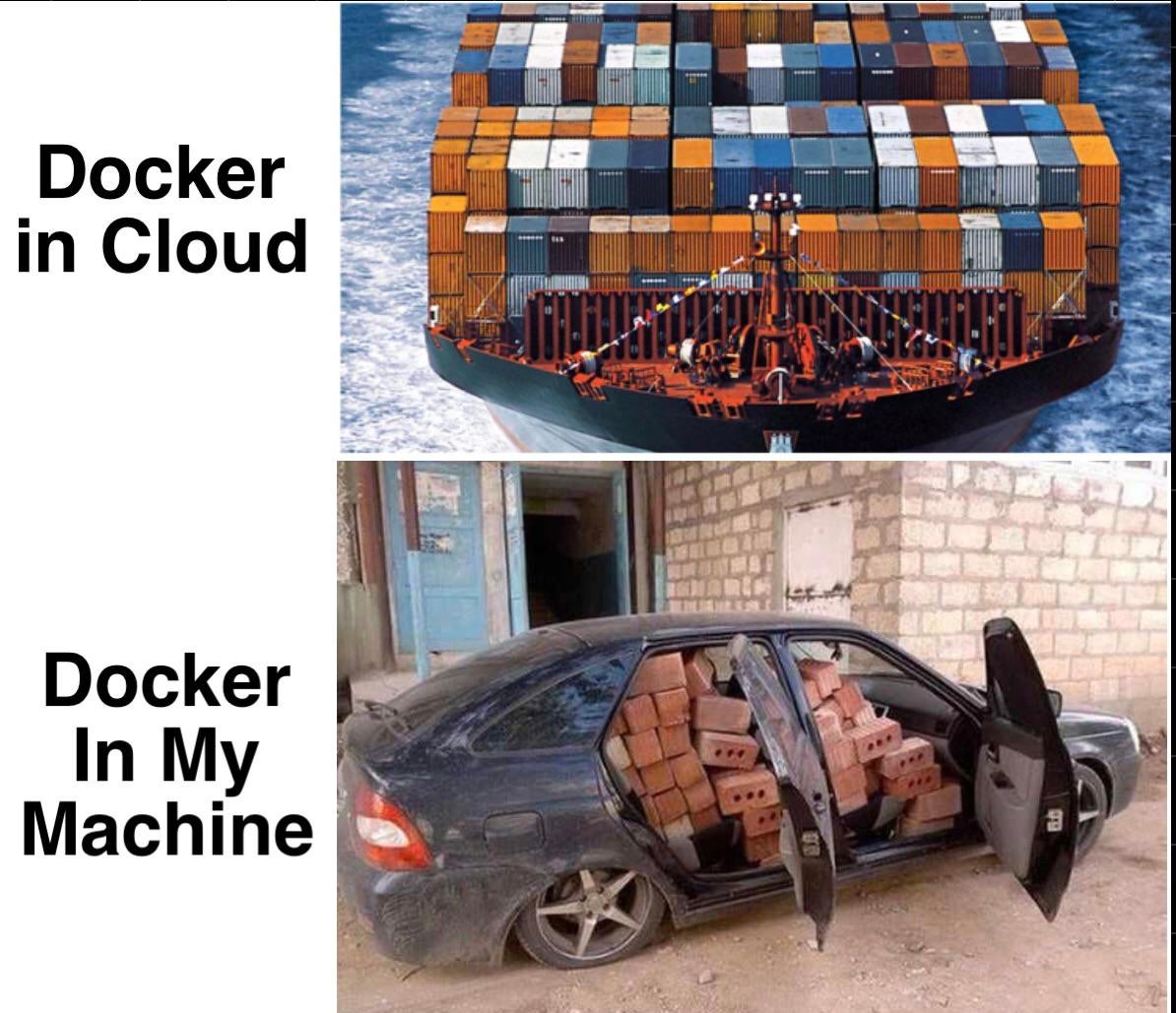

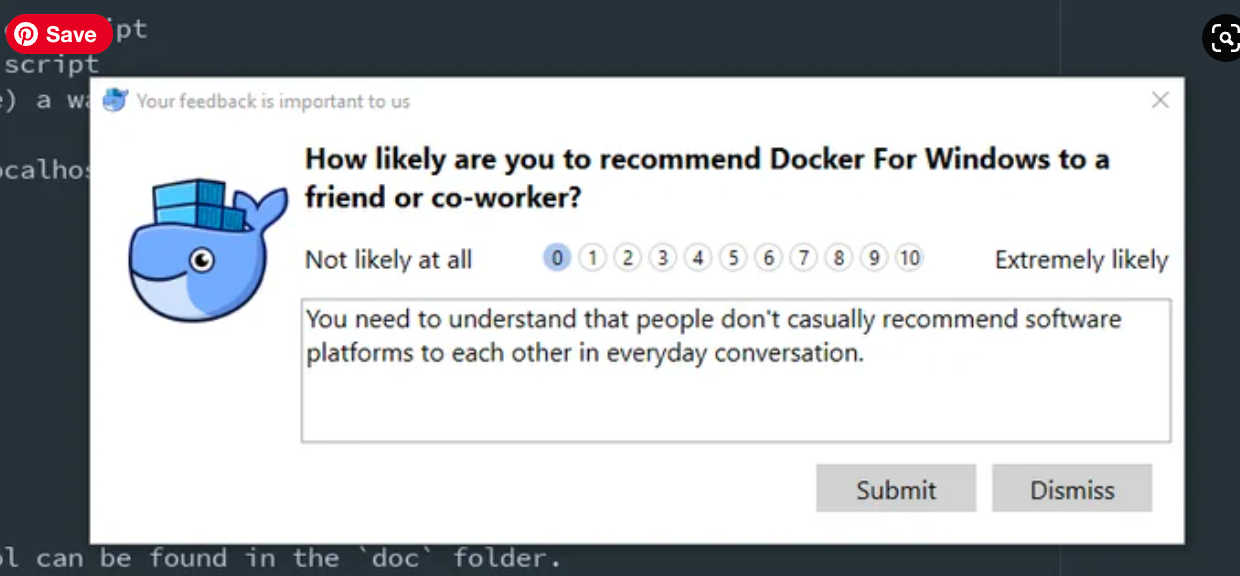

3. Docker’s new controversies (and new features)

To change the topic a bit, we’ll finish with Docker.

Last week, Docker caused a stir in the open-source community by announcing it was ending Free Team subscriptions. Critics read this as “pay up or lose your data,” but Docker quickly retorted – apologizing and claiming that the change did not imply any nefarious plans against open-source projects to force them to pay.

The company argued that the Free Team tier was being used by various types of customers and users, but didn’t serve any of them particularly well. Docker was trying to move customers off this tier, not to force open-source projects to pay $420 a year. The idea was to move open-source projects to the Docker-Sponsored Open Source program, which offers better benefits than the Free Team tier, such as no rate limits, improved visibility on Docker Hub, and better analytics.

However, after “receiving feedback and consulting with the community,” Docker decided to reverse its decision to discontinue the Free Team plan. Customers on the Free Team plan no longer have to move to another plan before April 14, and customers who upgraded their subscriptions between March 14 and today’s announcement will receive a full refund. Customers who chose to migrate to the Personal or Pro plan will remain on their current Free Team plan or can open a new account through the website. Docker also encourages open-source projects to apply for the Docker-Sponsored Open Source (DSOS) program.

However, you have to give Docker credit: last week brought more than just controversy over subscription tiers. The company has also partnered with Ambassador Labs to simplify Kubernetes development and testing with Telepresence for Docker. This option lets developers use Telepresence together with Docker tools, connecting local development machines to remote dev and staging clusters on Kubernetes. As a result, developers can easily iterate on code locally, test the effects of those changes in the context of a distributed application, and collaborate effectively.

Telepresence for Docker works by running a network traffic manager pod in Kubernetes and Telepresence client daemons on developers’ workstations. The network traffic manager acts as a two-way network proxy, intercepting connections and routing traffic between the cluster and the containers running on the developers’ machines.
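
To make the “two-way proxy” idea a bit more tangible, here is a minimal sketch in Python. It is not how Telepresence is actually implemented; it only illustrates the general pattern of relaying bytes in both directions between two connections. The names `pipe`, `start_proxy`, and `demo` are made up for this example, and a tiny local echo server stands in for a container running on a developer’s machine.

```python
import asyncio


async def pipe(reader: asyncio.StreamReader, writer: asyncio.StreamWriter) -> None:
    """Copy bytes one way until the source closes, then close the destination."""
    try:
        while data := await reader.read(4096):
            writer.write(data)
            await writer.drain()
    finally:
        writer.close()


async def start_proxy(target_host: str, target_port: int) -> asyncio.AbstractServer:
    """Listen on a free local port and relay every connection to the target."""

    async def handle(client_reader, client_writer):
        upstream_reader, upstream_writer = await asyncio.open_connection(
            target_host, target_port
        )
        # One pipe per direction is what makes the relay two-way.
        await asyncio.gather(
            pipe(client_reader, upstream_writer),
            pipe(upstream_reader, client_writer),
        )

    return await asyncio.start_server(handle, "127.0.0.1", 0)


async def demo() -> bytes:
    """Send one message through the proxy to a local echo service (hypothetical stand-in)."""

    async def echo(reader, writer):
        writer.write(await reader.read(4096))
        await writer.drain()
        writer.close()

    service = await asyncio.start_server(echo, "127.0.0.1", 0)
    service_port = service.sockets[0].getsockname()[1]
    proxy = await start_proxy("127.0.0.1", service_port)
    proxy_port = proxy.sockets[0].getsockname()[1]

    # The client talks only to the proxy and never sees the real service address.
    reader, writer = await asyncio.open_connection("127.0.0.1", proxy_port)
    writer.write(b"hello from the cluster")
    await writer.drain()
    reply = await reader.read(4096)
    writer.close()

    proxy.close()
    service.close()
    return reply
```

Calling `asyncio.run(demo())` sends a message through the relay and returns whatever the echo service sent back. A real traffic manager additionally has to decide which connections to intercept and where to send them; this sketch skips that part entirely.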