The main course of today’s edition is a look at what the language development process looks like under the hood – using Scala developers as an example. However, we’ll also discuss Red Hat’s increased involvement in Eclipse Temurin and the dependency management process.

1. Eclipse Temurin gets commercial support from Red Hat

Let’s start with the only major news story of the past week: Eclipse Temurin, the OpenJDK build developed by Adoptium, has been officially adopted (hehe) by another player. Red Hat, involved in the project from the very beginning, has announced official commercial support for the initiative. Some of these names are probably unfamiliar to many of you, so here is a quick reminder and a little glossary.

Most of you are probably familiar with the AdoptOpenJDK project, which grew out of the community’s fight for a “free” JDK implementation when Oracle made Oracle JDK a paid product for commercial use a few years ago. AdoptOpenJDK was initially developed by Java User Groups from around the world and eventually came under the wing of the Eclipse Foundation. As a result, AdoptOpenJDK was rebranded as Eclipse Adoptium (JDK is a trademark owned by Oracle) and the Adoptium Working Group was formed.

The working group includes many companies involved in Java development, such as the aforementioned Red Hat, and the main artifacts of its work are:

- Eclipse Temurin – the official OpenJDK distribution of the group, but also all the infrastructure for building and coordinating releases.

- Eclipse AQAvit – a large suite of quality tests covering functionality, security, performance and durability, which also defines the quality criteria required by “corporate customers.”

- Eclipse Temurin-Compliance ensures that Eclipse Temurin binaries are compliant with the Java SE specification.

Returning to Red Hat: it has shipped its own OpenJDK build for many years, but now intends to focus on Temurin. This means that the company’s customers can count on official support for this distribution, which is expected to be available in all of the company’s products.

Of course, Red Hat is not alone in this initiative. Microsoft has also recently put a lot of energy into developing the Java ecosystem. It is doing this through membership in working groups (both Adoptium and Jakarta EE), but also by supporting Java as well as it can in the Azure cloud. The company seems keen to get the word out about this collaboration, as the Spring Framework’s communication channels featured a guest article by Microsoft’s Julia Liuson this week, summarizing the whole range of Microsoft’s activities in this area. If you’re an Azure user, it’s worth a peek. Besides, I find these “crossover episodes” interesting as examples of the collaborations taking place in the ecosystem.

Sources

- Red Hat expands support for Java with Eclipse Temurin

- Microsoft is committed to the success of Java developers

2. What do you have in your dependencies? And how do you verify it?

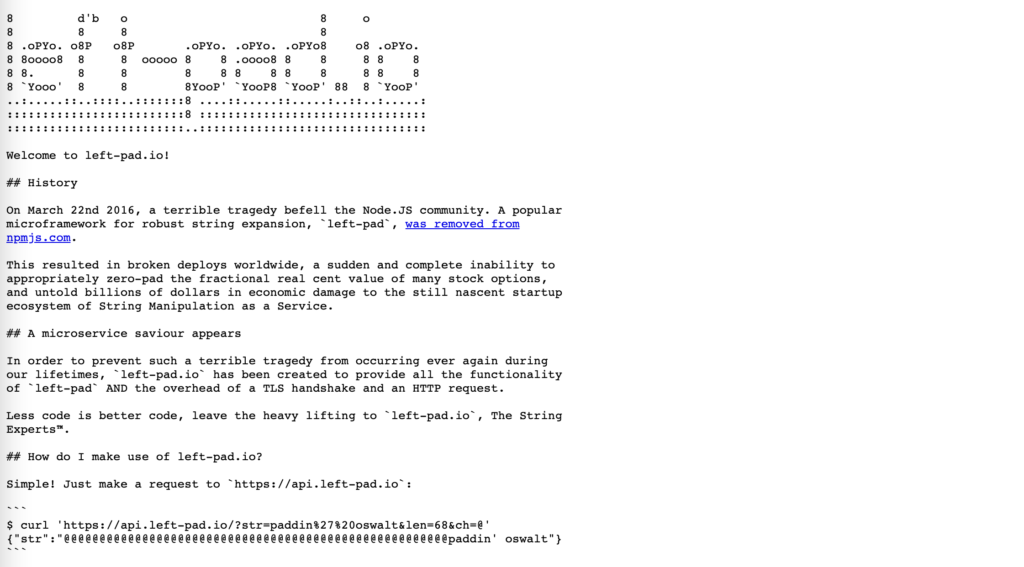

Well, as far as news goes, the Red Hat story above has exhausted our pool. But man does not live by news alone: sometimes (and I would say disturbingly often) what gets one’s attention is not the latest JDK version, but compatibility or (gulp) security problems with external dependencies. My impression is that in this regard our industry swings between two extremes, from very strict rules of the “tribal elders who haven’t seen the source code in ten years must agree to bump up Spring’s minor” kind to “left-pad? Why write it from scratch when you can use a 10-line external library from a random person on the internet?”

Fortunately, there is also a third way, which is to take a conscious and reasonable approach to dependencies. I realize that every project has to decide for itself how close to the bleeding edge it wants to stay. However, if you are looking for best practices to help you strike that balance, I recommend the text Best practices for managing Java dependencies from snyk.io on this very topic. Snyk is a widely known platform that provides security tooling for the development process (including, for example, dependency scanning). The publication in question, however, presents a set of suggestions worth implementing in any project.

In the text you will find a lot of good tips on how to easily check the support level of the libraries you are using, how to tell whether your packages need to be updated, and which plugins for Maven and Gradle make this process easier. There is, of course, a bit of content marketing, as Snyk itself appears among the recommended tools, but even those who do not intend to add a new tool to their toolchain will find some interesting advice.

Sources

- Best practices for managing Java dependencies

3. How to test compilers and design the release cycle? Find out from the example of Scala

And finally, we turn to Scala, in a really unique context. I have two texts for you that let you look under the hood of the core team developing the language itself.

Sometimes we forget this, but a compiler is not just a magic box that turns code into a runnable application. It is also a program in itself. This means that it is worked on by development teams that have their own Jira and sprints, and that generally use normal software development methodologies. That’s why, for example, each new Java release has its own specific [milestones](https://openjdk.org/projects/jdk/19/), needed to stabilize the release with appropriate testing periods. All of it also has to be built somewhere.

We started today’s edition with Eclipse Temurin, which provides (in addition to the OpenJDK distribution itself) the infrastructure for building it. For those curious about how this kind of process works in other languages, here comes a good chance to read about Scala’s “behind the scenes”. The team responsible for the Scala compiler at VirtusLab has shared a case study describing their approach to compiler regression testing. While Java focuses on meeting the formal specification and the so-called TCK (Technology Compatibility Kit), Scala’s developers go a step further by testing their final product on real open-source projects, so they gain confidence that the changes they’ve made won’t break the ecosystem.
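To make the idea more concrete, here is a minimal sketch of what “testing a compiler on real projects” boils down to: build the same set of projects with the current compiler and with the candidate one, then flag every project that used to build and no longer does. All names and data below (`CommunityBuildSketch`, `findRegressions`, the project names) are hypothetical and are not taken from the actual Scala tooling, which is described in the case study itself.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: names and data are illustrative, not taken from the Scala tooling.
class CommunityBuildSketch {

    // Returns the projects that built with the baseline compiler but fail with the candidate.
    static List<String> findRegressions(Map<String, Boolean> baseline,
                                        Map<String, Boolean> candidate) {
        List<String> regressions = new ArrayList<>();
        for (Map.Entry<String, Boolean> entry : baseline.entrySet()) {
            boolean builtBefore = entry.getValue();
            // Assumption: a project missing from the candidate run counts as failing.
            boolean buildsNow = candidate.getOrDefault(entry.getKey(), false);
            if (builtBefore && !buildsNow) {
                regressions.add(entry.getKey());
            }
        }
        return regressions;
    }

    public static void main(String[] args) {
        Map<String, Boolean> baseline = new LinkedHashMap<>();
        baseline.put("project-a", true);
        baseline.put("project-b", true);
        baseline.put("project-c", false); // already broken, so not a regression

        Map<String, Boolean> candidate = new LinkedHashMap<>();
        candidate.put("project-a", true);
        candidate.put("project-b", false);
        candidate.put("project-c", false);

        System.out.println(findRegressions(baseline, candidate)); // prints [project-b]
    }
}
```

Comparing against a baseline, rather than simply listing failures, matters here: a project that was already broken before the change tells you nothing about the change itself.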

One of my favorite “laws” of software development is the so-called Hyrum’s Law, also referred to as “Murphy’s Law for APIs.” It reads as follows:

With a sufficient number of users of an API, it does not matter what you promise in the contract: all observable behaviors of your system will be depended on by somebody.

I have a feeling that Scala developers have taken it firmly to heart and are working in exactly that spirit. For anyone interested in the internals of development tools, I highly recommend reading the case study itself, as it provides a rather unique insight into the process of testing a component as critical to the language ecosystem as the compiler.

And while we’re on the subject of Scala, its long-term support plans were recently published. From that publication, you can learn which releases will get ongoing support, which will no longer be developed, and which should in turn be abandoned. This is because Scala developers are introducing two types of releases, Scala LTS (with three years of support) and Scala Next, which will be quite separate from each other while still allowing relatively simple migration. Even if you don’t use Scala, this is again an interesting read, as the text goes deep into the nuances of what the language’s developers have in mind when they think about long-term support for specific versions.
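Hyrum’s Law is easy to reproduce on a small scale. The sketch below uses an invented library method and an invented caller (`tagsV1`, `tagsV2` and `primaryTag` are hypothetical names): both versions return an equal `Set`, and the contract never promised any ordering, yet a caller that quietly relied on the iteration order changes its behavior after the “harmless” upgrade.

```java
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical library and caller, invented purely to illustrate Hyrum's Law.
class HyrumsLawSketch {

    // Version 1: the contract only promises "a set of tags", with no ordering guarantee.
    static Set<String> tagsV1() {
        return new LinkedHashSet<>(Arrays.asList("scala", "java", "kotlin"));
    }

    // Version 2: same contract, same elements, but a sorted iteration order.
    static Set<String> tagsV2() {
        return new TreeSet<>(Arrays.asList("scala", "java", "kotlin"));
    }

    // A caller that silently depends on the unpromised iteration order.
    static String primaryTag(Set<String> tags) {
        return tags.iterator().next();
    }

    public static void main(String[] args) {
        System.out.println(tagsV1().equals(tagsV2())); // prints true
        System.out.println(primaryTag(tagsV1()));      // prints scala
        System.out.println(primaryTag(tagsV2()));      // prints java
    }
}
```

No unit test of the library’s documented contract would catch this, which is one way to read the appeal of building real downstream projects against every compiler change.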

Sources

- Long-term compatibility plans for Scala 3

- Hyrum’s Law

- How to prevent Scala 3 compiler regressions with Community Build

PS: No JVM Weekly next week – I’m on vacation 🏝