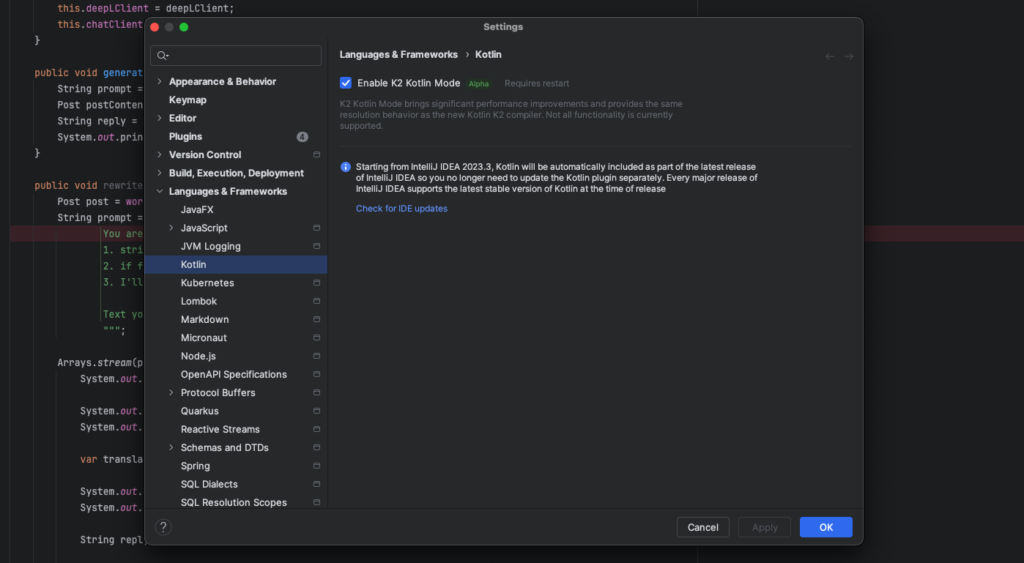

1. JetBrains enables testing of K2 Mode in IntelliJ IDEA

We’ll start with the big news from JetBrains. From version 2024.1 (which Ultimate subscription holders can test), their flagship IntelliJ IDEA will offer an optional K2 mode. The IDE will therefore have two separate modes: classic mode (enabled by default), where the standard Kotlin compiler (for Kotlin 1.x) is used to analyse code, and K2 mode, where the new K2 compiler coming with Kotlin 2.0 is used. As K2 was de facto written from scratch, including it in the IDE is expected not only to bring performance improvements and a better internal tooling architecture, but also to ensure compatibility with future Kotlin features. Support for K2 mode in IntelliJ IDEA 2024.1 includes code highlighting and completion, navigation, usage search, debugging, refactoring and basic editing features.

To make it a bit clearer: K2 mode only applies to code analysis in the IDE and does not depend on the Kotlin compiler version specified in the project build settings. To compile a project with the K2 compiler, you still need to specify this in the project build settings. Kotlin Multiplatform projects, Android projects, some types of refactoring and debugging tools, and code analysis in .gradle.kts files, as well as other minor features, are not yet supported in K2 mode. Support for the missing features will be added in upcoming releases.

That’s not all of the news, however, as JetBrains has also shared plans for the development of their flagship Kotlin framework, Ktor. In 2024 it is set to gain a host of new features and improvements, including an OpenTelemetry plugin that will enable the generation of telemetry data such as metrics, logs and traces. In addition, there will be gRPC integration with the Ktor client and server via an idiomatic Kotlin implementation, making it easier to create and use gRPC-based services. We can also expect transaction management via an official plugin that will start a transaction at the beginning of a request and commit it at the end unless errors occur, simplifying database access. Also announced was support for a new IO implementation, kotlinx-io, which will replace the existing solution as early as Ktor 3.0.0. kotlinx-io is based on Okio and is a multiplatform solution, which will make it easier for library developers to support Ktor. A plugin registry is also planned, allowing plugins created by the community to be registered.
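To make the transaction plugin idea a bit more tangible, here is a minimal sketch of the transaction-per-request pattern it describes. It is plain Java, not Ktor’s (Kotlin) API, since the plugin has only been announced; `Transaction`, `LoggingTransaction` and `handle` are hypothetical names I made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

final class TransactionPerRequestSketch {

    /** Hypothetical transaction abstraction; a real one would wrap a database connection. */
    interface Transaction {
        void commit();
        void rollback();
    }

    /** Records what happened, so the behaviour is visible without a database. */
    static final class LoggingTransaction implements Transaction {
        final List<String> log = new ArrayList<>();

        LoggingTransaction() { log.add("begin"); }

        @Override public void commit() { log.add("commit"); }

        @Override public void rollback() { log.add("rollback"); }
    }

    /** Runs the request handler: commit if it returns normally, roll back if it throws. */
    static <T> T handle(Transaction tx, Supplier<T> handler) {
        try {
            T response = handler.get();
            tx.commit();
            return response;
        } catch (RuntimeException e) {
            tx.rollback();
            throw e; // the error still reaches the caller after the rollback
        }
    }

    public static void main(String[] args) {
        LoggingTransaction ok = new LoggingTransaction();
        handle(ok, () -> "200 OK");
        System.out.println(ok.log);

        LoggingTransaction failed = new LoggingTransaction();
        try {
            handle(failed, () -> { throw new IllegalStateException("boom"); });
        } catch (IllegalStateException expected) {
            // Expected: the handler failed, so the transaction was rolled back.
        }
        System.out.println(failed.log);
    }
}
```

The point of the pattern is that individual handlers never call `commit` or `rollback` themselves; the wrapper decides based purely on whether the handler succeeded.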

The last part of the announcement is that Dependency Injection will be simplified by officially adding DI support to the Ktor server in 2024 and publishing guidelines on how best to integrate existing DI libraries. Ktor will be modified to support DI and integrate existing DI frameworks. This announcement caused a lot of controversy, so much so that the developers had to publish a follow-up in which they stressed that using a DI framework in Ktor will never be required, and that Ktor itself will never include an embedded DI framework as part of its design. The proposed functionality is only for users who want to wire DI into their Ktor services, and aims to integrate existing DI frameworks into Ktor as seamlessly as possible.

And while we’re on the subject of Dependency Injection, Helidon has announced a new feature: their own approach to dependency injection, based on the JSR-330: Dependency Injection for Java standard. The core of Helidon Injection is an annotation processor that searches for standard jakarta/javax annotations in application code. When such annotations are found in a class, Helidon Injection generates a so-called Activator for that class/service, which manages its lifecycle. For example, the presence of an @Inject or @Singleton annotation in a class FooImpl results in the creation of a FooImpl.injectionActivator. The activators for the services of a given module are then aggregated so that they can be registered in the “service registry” (which is itself a class generated by the annotation processor), and thus later easily located and initialised.

```java
@Generated(value = "io.helidon.inject.tools.creator.impl.DefaultActivatorCreator", comments = "version = 1")
@Singleton @Named(Injection$Module.NAME)
public class Injection$Module implements Module {
    static final String NAME = "inject.examples.logger.common";

    @Override
    public void configure(ServiceBinder binder) {
        binder.bind(io.helidon.inject.examples.logger.common.AnotherCommunicationMode$injectionActivator.INSTANCE);
        binder.bind(io.helidon.inject.examples.logger.common.Communication$injectionActivator.INSTANCE);
        binder.bind(io.helidon.inject.examples.logger.common.DefaultCommunicator$injectionActivator.INSTANCE);
    }
}
```

This approach also makes it possible to provide ‘lazy’ service activation based on the current demand of a particular application: a given service can remain dormant until it is needed, which of course means better resource efficiency and improved application performance. It also opens the door to extensive integration and extensibility. Among other things, Helidon Injection allows interceptor service code to be generated via the InterceptedTrigger meta-annotation.
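The lazy activation idea is easy to picture with a small hand-rolled sketch. To be clear, this is not Helidon’s generated code or API: `LazyRegistrySketch`, `Activator`, `bind`, `lookup` and `isActive` are hypothetical names, and the only thing the example is meant to show is that a bound service is not created until somebody asks for it.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Supplier;

final class LazyRegistrySketch {

    /** Hypothetical stand-in for a generated activator: creates its service on first use only. */
    static final class Activator<T> {
        private final Supplier<T> factory;
        private T instance; // stays null while the service is dormant

        Activator(Supplier<T> factory) {
            this.factory = factory;
        }

        synchronized T get() {
            if (instance == null) {
                instance = factory.get();
            }
            return instance;
        }

        synchronized boolean isActive() {
            return instance != null;
        }
    }

    private final Map<Class<?>, Activator<?>> activators = new ConcurrentHashMap<>();

    /** Registers how to build a service; nothing is instantiated at this point. */
    <T> void bind(Class<T> type, Supplier<T> factory) {
        activators.put(type, new Activator<>(factory));
    }

    /** Activates the service on first lookup and returns the same instance afterwards. */
    <T> T lookup(Class<T> type) {
        Activator<?> activator = activators.get(type);
        if (activator == null) {
            throw new IllegalArgumentException("No service bound for " + type.getName());
        }
        return type.cast(activator.get());
    }

    boolean isActive(Class<?> type) {
        Activator<?> activator = activators.get(type);
        return activator != null && activator.isActive();
    }

    public static void main(String[] args) {
        LazyRegistrySketch registry = new LazyRegistrySketch();
        registry.bind(StringBuilder.class, () -> new StringBuilder("expensive service"));
        System.out.println("active before lookup: " + registry.isActive(StringBuilder.class));
        registry.lookup(StringBuilder.class);
        System.out.println("active after lookup: " + registry.isActive(StringBuilder.class));
    }
}
```

In the real thing the binding step is generated at build time by the annotation processor rather than written by hand, which is where the resource savings come from: no reflection and no eager instantiation at startup.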

2. Some follow-ups to the JDK 22 release

The topic of the release of JDK 22 is not going away because, as usual, this type of release is accompanied by several publications from the community. Therefore, I have two for you today, somewhat as a follow-up to last week’s edition.

Let’s start with a publication by Sean Mullan, who as usual shared the security updates in the new JDK. There aren’t any revolutionary changes here. Among the main new features are the addition of several new root CA certificates, the introduction of the java.security.AsymmetricKey interface, XML Signature support for RSA signature algorithms with SHA-3 digests, and support for the HSS/LMS signature algorithm in the keytool and jarsigner tools. In addition, JDK 22 makes it easier to manage the maximum length of TLS certificate chains for client and server through new system properties.

Let’s move on to the topic of performance. The second text that caught my attention was “How fast is Java 22?” by Lukáš Petrovický of timefold.ai, a project dealing with scheduling for business in the broad sense, such as employee shifts and vehicle bookings. At the heart of the project is the Timefold Solver engine, which is responsible for its core logic, and it was this engine that Lukáš used to test the performance of JDK 22 and GraalVM for JDK 22. The tests were designed to verify that the Timefold Solver code still runs smoothly on Java 22 and that the performance is at least as good as before. As is usually the case with benchmarks, they should be taken with a pinch of salt, but Lukáš was running them for his own purposes, so I expect he approached the subject without bias and with due diligence.

In the results of microbenchmarks (performed using the Java Microbenchmark Harness, JMH) and real-world tests, the performance of OpenJDK 22 was unchanged compared to Java 21, with the exception of one test that required further analysis. In contrast, GraalVM for JDK 22 (even without the use of native images) showed a significant improvement, around 5% on average and up to around 15% in specific benchmarks, making it an attractive option for applications using Timefold Solver. In other words, moving to Java 22 should not change performance by itself, but switching to GraalVM for JDK 22 can yield a noticeable gain. Interestingly, contrary to general trends, the Timefold developers rely on the classic ParallelGC instead of the default G1, because they care about throughput rather than latency.

Well, I will say: more points for GraalVM.
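A side note on the garbage collector choice mentioned above: if you want to repeat this kind of comparison yourself, it is worth confirming which collector the JVM is actually running with before trusting any numbers. The snippet below is a small sketch using the standard `java.lang.management` API; the class and method names (`ActiveGcSketch`, `collectorNames`) are mine.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.List;
import java.util.stream.Collectors;

final class ActiveGcSketch {

    /** Returns the names of the garbage collectors registered in the running JVM. */
    static List<String> collectorNames() {
        return ManagementFactory.getGarbageCollectorMXBeans().stream()
                .map(GarbageCollectorMXBean::getName)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // The exact names depend on the JVM vendor, version and selected collector.
        System.out.println(collectorNames());
    }
}
```

Running it with `java -XX:+UseParallelGC ActiveGcSketch.java` should list the parallel collectors (on HotSpot these are typically reported as `PS Scavenge` and `PS MarkSweep`), while running it without the flag on a reasonably sized machine will usually show the G1 ones.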

3. Release Radar

JDK Mission Control 9.0

For those not familiar with the project, JDK Mission Control (JMC) is a tool for monitoring, managing and profiling Java applications. It is designed to add no significant overhead to the running application, which makes it easier to justify using it in a production environment. By integrating with the JDK, JMC offers access to a rich range of Java application performance and behaviour data through the analysis of Java Flight Recorder (JFR) recordings, enabling users both to collect real-time performance data and to view and analyse historical data.

Last week saw the release of JDK Mission Control 9.0, which requires at least the previous LTS, JDK 17, to run, while still supporting the parsing of JFR recordings from JDK 7u40 onwards (Java compatibility never ceases to impress me). JMC 9 introduces a dark theme (for all you night owls), compatibility with Eclipse 4.30 and support for Linux aarch64, as well as improvements to the JFR parser and a reorganisation of its classes that allows better integration with third-party applications. Significant changes also include new rules for evaluating performance and resource usage, improved visualisation in the form of a Java-based flamegraph, and the removal of the Twitter plugin (I was surprised that one existed at all) due to API changes and maintenance costs.
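If you have never touched JFR directly, the sketch below shows roughly what kind of data JMC consumes. It defines a custom event, records a few instances using the standard `jdk.jfr` API (part of OpenJDK since JDK 11), dumps them to a temporary `.jfr` file and reads them back. The event name `sketch.OrderProcessed` and the helper `recordAndCount` are made up for illustration.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;

import jdk.jfr.Event;
import jdk.jfr.Label;
import jdk.jfr.Name;
import jdk.jfr.Recording;
import jdk.jfr.consumer.RecordedEvent;
import jdk.jfr.consumer.RecordingFile;

final class JfrSketch {

    /** Hypothetical application event; its fields become columns in the recording. */
    @Name("sketch.OrderProcessed")
    @Label("Order Processed")
    static class OrderProcessed extends Event {
        @Label("Order Id")
        int orderId;
    }

    /** Records the given number of events to a temporary file and counts them on read-back. */
    static long recordAndCount(int orders) {
        try {
            Path file = Files.createTempFile("jfr-sketch", ".jfr");
            try (Recording recording = new Recording()) {
                recording.enable(OrderProcessed.class);
                recording.start();
                for (int i = 0; i < orders; i++) {
                    OrderProcessed event = new OrderProcessed();
                    event.orderId = i;
                    event.commit(); // writes the event into the active recording
                }
                recording.stop();
                recording.dump(file);
            }
            long count = 0;
            for (RecordedEvent event : RecordingFile.readAllEvents(file)) {
                if ("sketch.OrderProcessed".equals(event.getEventType().getName())) {
                    count++;
                }
            }
            Files.deleteIfExists(file);
            return count;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("events read back: " + recordAndCount(3));
    }
}
```

A file produced this way (if you keep it instead of deleting it) is the same kind of recording you would open in JMC for analysis.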

Gradle 8.7

Gradle 8.7 introduces a number of improvements and new features, including support for projects using Java 22, although interestingly Gradle 8.7 itself cannot yet run on this version of Java because Groovy does not support it yet.

In terms of functionality, Groovy build script compilation can now make better use of a local or remote build cache, avoiding recompilation and reducing the first build time of a project. There is also a better API for updating collection properties and improvements to error and warning reporting, which make it easier to identify and resolve issues related to plugins, among other things. The rest of the changes relate to configuration cache improvements, new features in the Kotlin DSL (including an update to Kotlin 1.9.22), and improvements to TestNG support and file system symlink handling.

Apache Pekko becomes a Top Level Project of the Apache Software Foundation

Apache Pekko was created as an alternative to the popular Akka framework, which is widely used to create reactive applications in Scala and Java. Apache Pekko development was also accelerated by a change in the Akka licence, which caused uncertainty among the development community about the future access and use of the framework in commercial projects. The main goal of the creation of Apache Pekko was to offer similar functionality to Akka, but under the aegis of the Apache Software Foundation, which guarantees the openness and accessibility of the project to a wider range of developers. The project has now been awarded Top Level Project status.

The achievement of Top Level Project (TLP) status by Apache Pekko is an acknowledgement of its value and stability as a reactive application development tool. TLP status in the Apache Software Foundation (ASF) ecosystem means that the project has undergone a comprehensive incubation process, demonstrating its viability, ability to evolve independently and maintain an active community. For Apache Pekko, being a TLP not only means prestige and recognition in the open-source community, but also greater independence and opportunities for growth. The project can now enjoy the full support of the foundation, managing its own infrastructure, processes and policies, reinforcing its position as a key tool for developers looking for an alternative to Akka in building efficient and scalable reactive applications.